AI is fundamentally changing the landscape of software development. There are risks to the business models and monetization structures behind the companies building the AI superstructures. There are also fundamental changes to the way we build software, and that increased speed of delivery is shifting the conversations engineering, product, and the business need to have (which I will put a pin in and probably take up in a different post). In this post, I want to talk about the impacts on existing software offerings and companies, regardless of whether they start to use AI tooling or AI-first approaches.

Killing SaaS

There are a thousand think pieces out there about how AI will be the death of SaaS. While I agree with some of their premises, I suspect both the causes and effects are overblown, in part for social media consumption. I think we are also mixing up changing barriers to entry, shifting interaction patterns, the reevaluation of business model valuations, and the needs of software structures, then pointing at them and calling it all “SaaS”. You’ve come to my blog so you should know, by now, you are in for a history lesson.

We use the term “SaaS” interchangeably for both a software delivery method and a pricing model, but they are very different things. The software delivery method was a key innovation that enabled the pricing model, but you can have software delivered over the internet without pricing it as a per-seat “rental software” model. So, let’s talk about them separately.

What is SaaS?

The software delivery method is simply a shift from providing software via an installation on a computer to providing access to it through a web browser. This was a seismic change in the power of applications, who bore the cost of processing, where the data was owned and stored, and the “ownership” of that software. I used to buy Microsoft Word on a flippy disk and install it on my Intel 486 computer, a 32-bit machine named “Bride of Frankenstein” (because my brother built it from several other computers). I could, technically, still find that old disk and attempt to install it on my modern laptop, and I would still have the rights to do it. That’s because when I saved up my allowance to buy my very first word processor, I was sold a perpetual license to that software, represented by that disk and the fancy embossed documents that came with it. Microsoft built the software and then pressed a certain number of copies of it.

Starting in the 1960s, folks in computer science started to experiment with the concept of “time-sharing”, i.e. allowing multiple users to have access to a mainframe computer remotely. Around the turn of the 1990s, the web arises: first with the proposal for the World Wide Web in 1989, then the first browser in late 1990 and the first webpage (Berners-Lee’s info.cern.ch, a guide to what the WWW was and how to use it), followed by CGI (Common Gateway Interface, a set of rules for how web servers interface with external programs) in 1993. By 1995, we have JavaScript so developers can add dynamic elements beyond text, including input validation and animations (which have been the bane of every developer since). By 1998 we have XML, and shortly after it XMLHttpRequest, which allowed data to be requested from a server in the background (the basis of what later became AJAX, the second bane of every developer since). Also in the 90s we get the emergence of ASPs (application service providers, vendors that host the software for you), which leads to the 1998 introduction of NetSuite, the first cloud-based business management application. This means by the end of the 1990s we had the makings of “Web 2.0”: the interactive internet.

In 1999, Salesforce.com is founded, often credited with being the first “pure” SaaS. Here’s the thing about Salesforce. It was (probably) the first business application that was built as a web application from day one, as opposed to Microsoft Word, which started as a desktop application and later transitioned to a hybrid model (desktop plus web-based). There was never an “on-prem” version of Salesforce. It was always designed to be cloud-based only, specifically to compete against on-premises competitors.

What in SaaS is at Risk

There are two things this fundamentally changed. The first was where customers went to engage with their software. Instead of installing a piece of software on their desktop, customers were accessing the software through the internet. This increased the risks associated with poor internet access and the costs of personal computers. My 32-bit CPU Bride of Frankenstein wouldn’t even be able to turn on today, and a single tab of LinkedIn uses over 1 gig of RAM (someone else’s very opinionated take on this). Over the last two decades, there has been an interesting shift back to having a “desktop install” of web-based software. I personally prefer my Word with a desktop version even though I know behind the scenes it’s a web app. I don’t like having to hunt for it among my Chrome tabs. The web application is here to stay. AI isn’t going to kill the delivery method of SaaS.

The second is the licensing agreement for getting to use the software. Instead of the traditional “I bought this copy” structure of my ancient copy of Word, Salesforce’s contracts were to “rent” access to the software based on usage. This is the business model aspect, but it’s also a huge shift in data location and ownership. It massively reduced the upfront cost of software because you didn’t need to have an on-site IT department building and managing servers for your software. It also reduces the cost of ownership of software because it’s now a continuously renewing license with regular upgrades automatically pushed out (for better or for worse). This structure of licensing gave rise to “per seat” or usage-based fee structures. And that is what those think pieces say is dying. Well, that and the valuation of SaaS-based companies.

See, if I sell you a piece of software with an annual license based on usage, I lock you into those recurring costs. This becomes the ARR (annual recurring revenue) metric I use to value my company. If I sell you that flippy disk of Word, you may never come back and buy it again. Also, my income is bumpy because I get a lot of income when I sell a version but then it peters out over time until I release the next one: Microsoft Office 97 (first one with Clippy!), 2000, XP, 2003, 2007, 2010, 2013, 2016, 2019, 2021. If I sell you a subscription to Microsoft 365 (launched as Office 365 in 2011), it’s going to keep renewing until you cancel. The income from software levels out. The development cycles become continuous but easier to forecast. The valuation of my stock goes up because my income versus expenses, i.e. my return on investment, is more reliable.
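To make the bumpy-versus-level point concrete, here is a rough sketch in Python. Every number in it (units sold, prices, the rate at which sales of a boxed version taper off) is made up for illustration and has nothing to do with Microsoft's real figures. The function names are mine too.

```python
# Toy comparison of "sell a boxed version" revenue vs. subscription revenue.
# Every number below is an assumption made up for illustration.

def perpetual_revenue(years, release_years, launch_units, price, decay=0.5):
    """Revenue per year when each release sells best at launch, then tapers."""
    revenue = []
    for year in range(years):
        total = 0.0
        for release in release_years:
            age = year - release
            if age >= 0:
                # Sales of a given version shrink by `decay` each year after launch.
                total += launch_units * (decay ** age) * price
        revenue.append(total)
    return revenue


def subscription_revenue(years, subscribers, annual_price):
    """Revenue per year when the same subscribers renew every year (no churn)."""
    return [float(subscribers * annual_price)] * years


def spread(revenue):
    """Gap between the best and worst year: a crude measure of bumpiness."""
    return max(revenue) - min(revenue)


# Two boxed releases (year 0 and year 3) vs. a flat base of renewing subscribers.
boxed = perpetual_revenue(years=5, release_years=[0, 3], launch_units=1_000, price=100)
rented = subscription_revenue(years=5, subscribers=1_000, annual_price=40)

if __name__ == "__main__":
    print("boxed: ", boxed, "spread:", spread(boxed))
    print("rented:", rented, "spread:", spread(rented))
```

The boxed revenue spikes at each release and sags in between, while the subscription line stays flat. A real model would add churn and new signups, but the forecasting advantage is the same idea.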

But why will AI kill SaaS?

Because most SaaS is priced on a per-seat license. You have one person sit down at a computer and use Word, you pay for one seat. You run a company with 1,700 employees and buy Word for all of them, you pay annually for 1,700 seats. Now you start replacing those employees with AI (it seems the headlines on this are a bit inflated) or, more likely, start using an AI agent to directly access information that humans were accessing through your web app. The ability to charge per seat may change dramatically. Smart SaaS companies will figure out how to iterate their pricing model to protect where they actually have differentiation (data cleanliness and availability, ease of use, integrations) instead of focusing on valuations based on ARR.
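Here is that arithmetic as a small Python sketch. Only the 1,700 seats comes from the example above. The seat price, the number of actions per employee, the usage price, and the assumption that agents pick up exactly the work of the seats that go away are all hypothetical.

```python
# Per-seat vs. usage-based revenue when agents replace some human seats.
# All prices and volumes are hypothetical; only the 1,700 seats is from the post.

SEAT_PRICE = 120            # dollars per seat per year (assumed)
ACTIONS_PER_SEAT = 2_000    # actions one employee performs per year (assumed)
PRICE_PER_1K_ACTIONS = 60   # dollars per 1,000 actions (assumed)


def per_seat_revenue(seats: int) -> int:
    """Annual revenue when the customer pays for each human seat."""
    return seats * SEAT_PRICE


def usage_revenue(total_actions: int) -> int:
    """Annual revenue when the customer pays for work done, whoever does it."""
    return total_actions * PRICE_PER_1K_ACTIONS // 1_000


def compare(seats_before: int, seats_after: int) -> dict:
    # Assume agents take over the work of the removed seats,
    # so the total number of actions stays the same.
    total_actions = seats_before * ACTIONS_PER_SEAT
    return {
        "per_seat_before": per_seat_revenue(seats_before),
        "per_seat_after": per_seat_revenue(seats_after),
        "usage_after": usage_revenue(total_actions),
    }


result = compare(seats_before=1_700, seats_after=1_000)

if __name__ == "__main__":
    print(result)
```

With these made-up numbers, per-seat revenue drops with the headcount even though the same amount of work flows through the product, while a usage-based price holds steady. That is the gap a pricing model would need to close.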

Changing Interaction Patterns

In addition to changing the traditional “per seat” pricing model, AI will also change where and how users interact with an application. For years in software design we have focused on user journeys, interaction language, and how to deliver the right part of the application to the right user at the right time. Web applications have become increasingly complex over the last two decades, serving multiple types of users trying to complete different jobs to be done, and providing different navigation and models to route those users. In the near term, AI changes the complexity of that problem. Instead of presenting a menu of options, an AI agent can simply ask and then direct users. Longer term, though, there is a high likelihood that users will stop wanting to log in to a specific application to do their task and instead want all their activities centralized within an agent-driven workflow. Why get information for an order from one system and then log in to another system to enter that order? It’s easy to go from that step to users starting to fade out of some of these activities altogether.

How, when, and where users interact with software will fundamentally change. Right now, there are a lot of hypotheses that the AI companies will own that interaction pattern. But while AI search and browsing tools like Perplexity are winning on their ability to search and shift the interaction pattern, it’s not clear that users will adopt a one-size-fits-all model. Do you want the information from your Facebook account commingled with your banking details and right next to your work assignment? At the end of the day, interaction patterns really change because the human users make changes. APIs fundamentally changed how data is moved between systems and I see AI accelerating some of that, but I’m more skeptical about humans being willing to use one application for all their online activities. Google, though, is betting that is the future.

Data and Security

The beating heart of the modern internet is data. Closely related is all the security needed to keep that data safe. SaaS as a delivery method moved the ownership of data into the cloud, and with it, via APIs, came a million integrations to move data from one system to another. AI consumes that data for everything it does. The better the data, the better the actions the AI can take. As Google well knows, control of the data is control of the internet. To quote the AI summary on the Google results: “Google’s perspective on data is centered on the belief that data is a vital, transformative resource that, when organized and combined with AI, improves user experiences, enhances business agility, and drives innovation.”

The problem is that at most of the companies I have worked at, the data isn’t clean. “Is this true?” is a question humans face every day. We have the concept of the “database of record” and even then the same question may have different answers based on the context of the person asking (customer, user, employee), the time it is asked, and the way it is asked. The standardization of APIs led a lot of companies to change the architecture of their applications to make use of decentralized data sources. AI is going to need a similar kind of transformation, built on top of the work that companies did to use microservices and APIs. But I have been inside companies where the center of their system is still a mainframe. They are miles away from AI agents running everything. Even the most sophisticated companies I have worked for, some that were totally greenfield and some that had spent millions rebuilding their data architecture, aren’t ready for the structures needed to make sure the answer is always the same. I see this as the real risk to companies undertaking AI initiatives: they will let the technology get ahead of their ability to deliver on actual customer needs.

Reducing barriers to entry

At the same time, AI is hugely reducing the barriers to entry. If we go back to B-school basics, Porter’s five forces model is still useful today for understanding business pressures: competitive rivals, potential for new entrants, supplier power, customer power, and threats of substitutes. Companies using AI to increase efficiency become more competitive rivals if you aren’t doing the same. But if you do use AI, now those AI companies have huge supplier power (something we touched on before). Killing the SaaS subscription model gives customers more power. But the biggest threat I see from AI is that it has hugely decreased the cost for new entrants and increased the available substitutes for your product.

You build an awesome product, let’s say it’s a word processor. You have built it up over decades and have a huge following. Now someone can vibe code a replacement in their basement over the weekend. Okay, that might be an exaggeration, but the barriers to entry in most industries, and the cost to build a suitable replacement, have hugely decreased. Now we need to think about our differentiation moats as something besides the features of the software, which is something we should have always been doing but have gotten lazy about. I worked for a company that did websites for hospitals. Our moat was not having a better CMS (content management system). It was that most companies didn’t want to go through the effort of HIPAA compliance, and we staffed with people who were experts in a specific industry. Microsoft’s moat around Word is not that it is the best word processor on the market. It’s not. For my creative writing I use Scrivener, which is far superior for complex, novel-length writing for publication. Google Docs is far better for business collaboration. OnlyOffice and OpenOffice are better for privacy, security, and document formatting control. Lark is better for integration with chat and project management. Many of the alternatives provide basically free migration through compatibility with the docx file format, removing another barrier. No, Microsoft’s moat is prevalence through packaging and brand trust. Pretty much every business uses the Microsoft Office Suite because it’s the default and it comes with all the tools. Microsoft shouldn’t rest on that history, and it isn’t. The world is full of examples of onetime darlings failing to adapt.

All of these factors will create huge challenges for companies now and in the near future. But AI will not be the cause of death of any company in the near future. The cause will be the failure of a company to understand its fundamentals, adapt to a changing environment, and keep its customers central to its goals. Disruption is the default in business. Adaptation is a leader’s job. AI is your friend. It’s true, just ask it. I promise you an interesting philosophical answer.

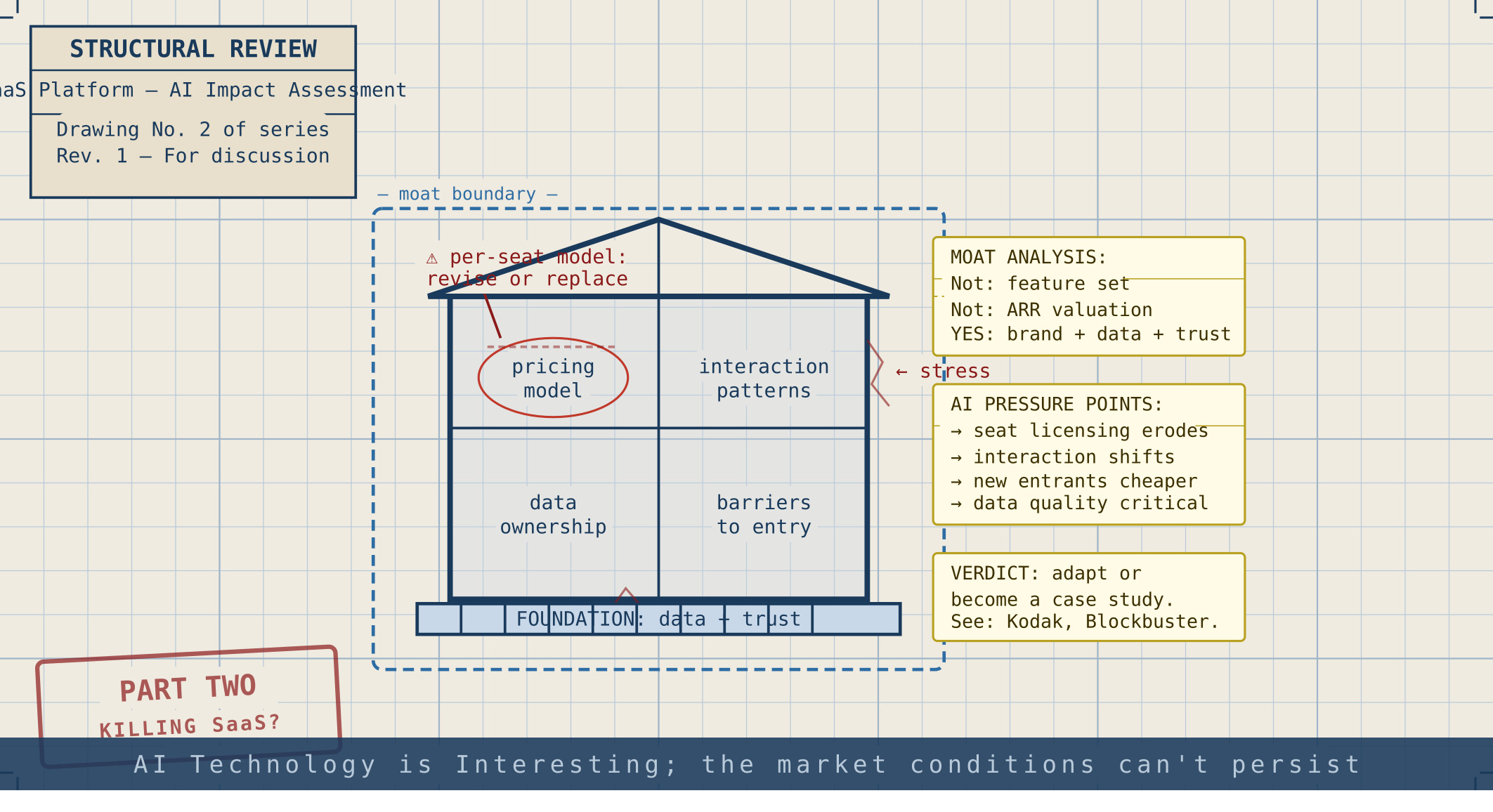

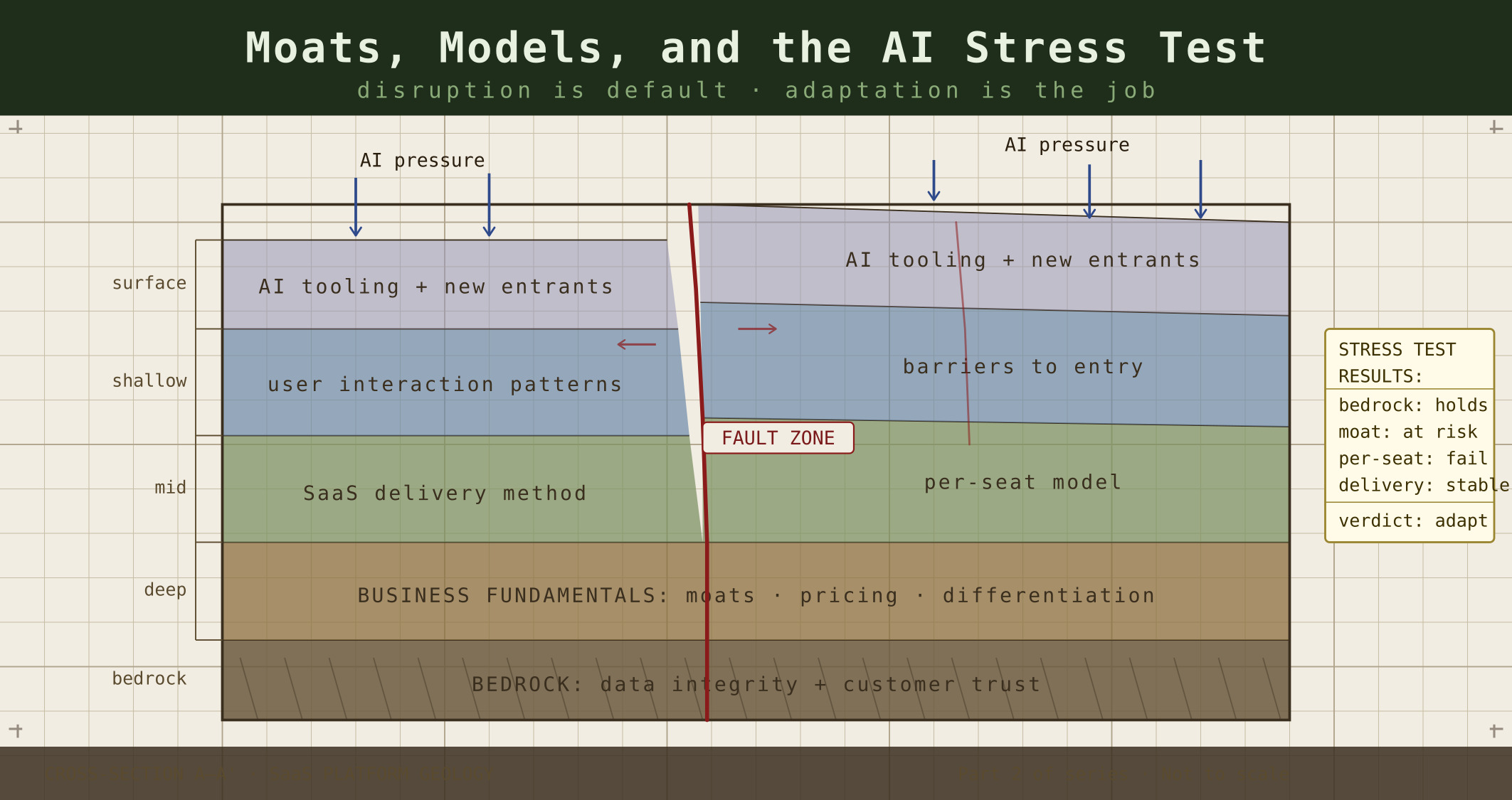

Note: I don’t use AI to help write my posts or create example pictures. I do use AI to create the header image. In this case I prompted Claude (Anthropic), Gemini (Google), ChatGPT (OpenAI) and Copilot (Microsoft/GitHub) by giving each of them my blog post as well as the image I used for the previous post. I’m gonna give Claude cred for coming up with multiple good images (seen below) that didn’t really fit the feel I was going for, though I really wanted to use them. Gemini leaned hard into infographics (which seems to be its default mode). This time Copilot won.

I also asked them all to give me a title and LinkedIn summary. Claude, again, won this challenge by asking good questions and effectively finding the thesis.

Claude’s first and second attempts are below. Sadly, it took me a surprising amount of work to figure out how to get them from the SVG format (which I am sure Claude was using to make edits easy) to web-ready formats… technology’s future is looking bleak…